The first sign of fraud in a regional mobility operation rarely shows up as a system alert. It shows up as a minor anomaly that the operator rationalizes away: a high-rated driver who suddenly generates complaints, a zone with plenty of drivers but slow assignment times, a completion rate that climbs without any improvement in passenger satisfaction. When those anomalies accumulate over weeks without intervention, what looked like a statistical inconsistency turns out to be a fraud pattern that has already cost between 3% and 8% of monthly gross revenue.

This article is for operators with 30 or more active drivers and at least 60 days of operation who are starting to notice numbers that don't add up but don't know where to look. The five patterns described here are the most common in LATAM regional markets. They aren't hypothetical or borrowed from large-scale contexts; they're the ones that appear when an operation with 40 to 200 drivers reaches enough volume for fraud to become financially attractive.

Ghost trips: when the service shows as completed but never happened

The ghost trip is the most frequent fraud pattern and the hardest to detect without geolocation data. The basic scheme works like this: an active driver accepts a request from a complicit passenger (in some cases an account the driver controls), marks the trip as started and then as completed, and collects the full fare even though no actual movement occurred. The platform logs a completed trip. The driver gets paid. The net fraud income equals the platform's commission, which in regional operations runs between 15% and 25% of the trip value.

The most reliable signal is the GPS trace recorded during the trip. A typical ghost trip shows one of three signatures: coordinates that don't move, or move within a radius of under 200 meters, during the declared trip time; a trace recorded by the driver's device that doesn't follow any road in the area; or a trip duration inconsistent with the distance charged. Operators who systematically cross-reference declared time with GPS distance find significant discrepancies in between 0.5% and 2.5% of trips during their first three months. Not all of these are intentional fraud (some are technical errors in poor-signal zones), but trips with flat or absent GPS traces warrant a direct conversation with the driver.
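A minimal Python sketch of those checks, assuming each trip can be exported as a list of GPS samples (latitude, longitude, seconds since start) together with the charged distance and the declared duration. The 200-meter radius comes from the paragraph above and the 30% distance ratio from the weekly review list later in this article; the speed bounds are illustrative assumptions, and `GpsPoint` and `ghost_trip_flags` are hypothetical names. Checking whether a trace follows real roads needs a map-matching service and is left out.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0


@dataclass(frozen=True)
class GpsPoint:
    lat: float
    lon: float
    t: float  # seconds since the trip was marked as started


def haversine_m(a: GpsPoint, b: GpsPoint) -> float:
    """Great-circle distance in meters between two GPS points."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(h)))


def ghost_trip_flags(
    trace: list[GpsPoint],
    charged_km: float,
    declared_min: float,
    flat_radius_m: float = 200.0,  # "moves within a radius of under 200 meters"
    min_gps_ratio: float = 0.30,   # GPS distance below 30% of charged distance
    min_speed_kmh: float = 3.0,    # assumption: slower than this is implausible for a real trip
    max_speed_kmh: float = 120.0,  # assumption: faster than this is implausible in town
) -> list[str]:
    """Return the reasons a trip deserves a conversation with the driver (empty if none)."""
    if len(trace) < 2:
        return ["absent_trace"]
    flags = []
    # Flat trace: no sample ever gets farther than flat_radius_m from the starting point.
    if max(haversine_m(trace[0], p) for p in trace) < flat_radius_m:
        flags.append("flat_trace")
    # Distance actually covered by the device, summed segment by segment.
    gps_km = sum(haversine_m(p, q) for p, q in zip(trace, trace[1:])) / 1000
    if charged_km > 0 and gps_km < min_gps_ratio * charged_km:
        flags.append("gps_distance_too_short")
    # Declared duration vs charged distance: the implied average speed must be plausible.
    if declared_min > 0:
        implied_kmh = charged_km / (declared_min / 60)
        if not (min_speed_kmh <= implied_kmh <= max_speed_kmh):
            flags.append("duration_inconsistent")
    return flags
```

A trip that returns an empty list passes all three checks; anything else goes on the list for a conversation, not an automatic sanction, since poor-signal zones produce some of the same flags.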

Coordinated surge manipulation: the fraud that looks like market forces

Demand manipulation is a collective pattern that requires coordination between drivers. The scheme works when three to seven active drivers in a high-demand zone disconnect simultaneously during a specific window, typically 15 to 30 minutes before the peak hour. The effect is mechanical: the sudden drop in active supply spikes assignment times, which, on platforms with dynamic pricing enabled, triggers the surge multiplier. Drivers reconnect once the multiplier is live and work through the inflated window.

What distinguishes demand manipulation from a genuine supply problem is its coordinated character. A real supply drop has geographic and temporal dispersion — drivers go offline in different zones at different times for independent reasons. Manipulation produces a clean-cut pattern: multiple drivers disconnect from the same neighborhood within a 5-to-10-minute window and reconnect together 20 to 35 minutes later. Filtering connection-disconnection logs by zone and time reveals that pattern clearly. In regional operations with active dynamic pricing, between 12% and 28% of surge events in high-frequency zones show a disconnection profile consistent with coordination.
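A minimal sketch of that log filter, assuming the platform can export each offline spell as driver, zone, offline time and online time. The 10-minute window, the three-driver minimum, the 20-to-35-minute gap and the more-than-twice-a-week threshold come from this article; the record layout and function names are hypothetical, and the grouping is a simple greedy sweep over sorted timestamps rather than any particular platform's method.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class OfflineSpell:
    driver_id: str
    zone: str
    offline_at: datetime
    online_at: datetime


@dataclass(frozen=True)
class Cluster:
    zone: str
    start: datetime
    drivers: frozenset
    reconnected_together: bool  # reconnections also fall within one window
    gaps_in_range: bool         # every driver stayed offline for 20 to 35 minutes

    @property
    def coordination_consistent(self) -> bool:
        return self.reconnected_together and self.gaps_in_range


def disconnection_clusters(spells, window=timedelta(minutes=10), min_drivers=3,
                           min_gap=timedelta(minutes=20), max_gap=timedelta(minutes=35)):
    """Group offline spells that start within `window` of each other in the same zone."""
    by_zone = defaultdict(list)
    for s in spells:
        by_zone[s.zone].append(s)
    clusters = []
    for zone, zone_spells in by_zone.items():
        zone_spells.sort(key=lambda s: s.offline_at)
        i = 0
        while i < len(zone_spells):
            start = zone_spells[i].offline_at
            # Spells are sorted, so those starting within the window form a contiguous run.
            j = i
            while j < len(zone_spells) and zone_spells[j].offline_at - start <= window:
                j += 1
            group = zone_spells[i:j]
            drivers = frozenset(s.driver_id for s in group)
            if len(drivers) >= min_drivers:
                ons = sorted(s.online_at for s in group)
                clusters.append(Cluster(
                    zone=zone,
                    start=start,
                    drivers=drivers,
                    reconnected_together=ons[-1] - ons[0] <= window,
                    gaps_in_range=all(min_gap <= s.online_at - s.offline_at <= max_gap
                                      for s in group),
                ))
                i = j  # consume the whole run so it is not reported twice
            else:
                i += 1
    return clusters


def zones_to_review(clusters, max_per_week=2):
    """(zone, ISO year, ISO week) keys with more than `max_per_week` clusters of any kind."""
    counts = defaultdict(int)
    for c in clusters:
        iso = c.start.isocalendar()
        counts[(c.zone, iso[0], iso[1])] += 1
    return sorted(key for key, n in counts.items() if n > max_per_week)
```

`zones_to_review` counts every cluster, matching the weekly rule; `coordination_consistent` adds the stricter "reconnect together" signature for deciding which clusters to discuss first.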

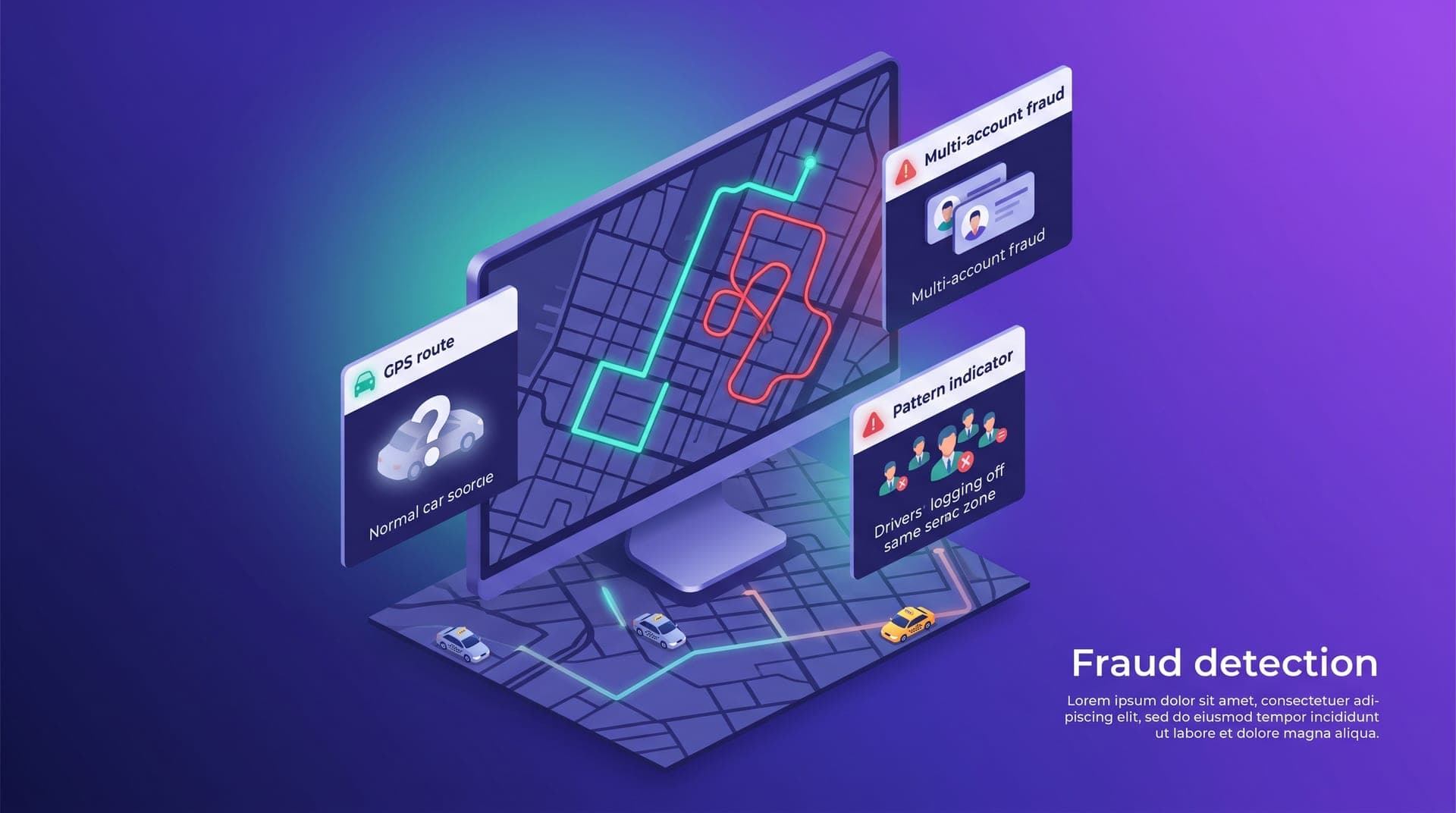

Multi-account fraud: one driver, two profiles, double benefit

Multi-account fraud is more common in platforms with activation or referral bonuses than in operations without monetary entry incentives. The driver creates a second account using documentation from a family member or acquaintance who agrees to lend it, and operates both alternately: the main account in high-demand zones and hours, the secondary one to collect activation bonuses without the main profile showing up as the beneficiary. In markets without mandatory biometric verification, the average time to set up a functional secondary account is two to four days.

The most direct detection method doesn't require biometric analysis; it requires cross-referencing device data. If two different driver accounts share the same device ID or the same associated phone number, or connect and disconnect in the same time blocks from the same location, it is highly likely that the same driver is running both. In operations with more than 100 active drivers, platforms that enable systematic device ID verification find that between 2% and 6% of accounts are duplicates. Accounts that share both a device ID and a geographic and temporal pattern should be verified directly before they stay active.
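A minimal sketch of the device cross-reference, assuming the platform can export each account's device IDs, phone numbers and coarse activity blocks. It treats shared identifiers as transitive (if A shares a phone with B and B shares a device with C, all three are grouped), which is a design choice for review purposes, implemented with union-find. The `Account` fields, the `(weekday, hour, cell)` activity-block format and the use of Jaccard similarity for pattern overlap are illustrative assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Account:
    account_id: str
    device_ids: set = field(default_factory=set)
    phones: set = field(default_factory=set)
    # Hypothetical activity blocks: (weekday, hour, coarse_cell) where the account came online.
    activity_blocks: set = field(default_factory=set)


def linked_account_groups(accounts):
    """Union-find over shared device IDs and phone numbers; returns groups of 2+ accounts."""
    parent = {a.account_id: a.account_id for a in accounts}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps the trees shallow
            x = parent[x]
        return x

    first_owner = {}  # identifier -> first account seen using it
    for a in accounts:
        keys = [("device", d) for d in a.device_ids] + [("phone", p) for p in a.phones]
        for key in keys:
            if key in first_owner:
                parent[find(a.account_id)] = find(first_owner[key])
            else:
                first_owner[key] = a.account_id

    groups = defaultdict(set)
    for a in accounts:
        groups[find(a.account_id)].add(a.account_id)
    return [g for g in groups.values() if len(g) > 1]


def pattern_overlap(a: Account, b: Account) -> float:
    """Jaccard similarity of two accounts' activity blocks (0.0 when both are empty)."""
    union = a.activity_blocks | b.activity_blocks
    return len(a.activity_blocks & b.activity_blocks) / len(union) if union else 0.0
```

Groups returned by `linked_account_groups` are the candidates; a high `pattern_overlap` within a group is the second signal the paragraph describes before an account is verified directly.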

Selective cancellations: rejecting trips without it registering as a rejection

Strategic cancellation doesn't aim to steal money directly; it aims to optimize the driver's experience at the cost of the passenger and the operation. The most common pattern: the driver accepts the request so it doesn't count as a rejection in their acceptance rate, reviews the trip details after accepting, and, if the destination is inconvenient (a long trip outside their zone, a destination in an area with little return demand, a low fare over an extended distance), cancels before reaching the passenger, citing a technical reason. On the dashboard it shows up as just another cancellation. From the passenger's perspective, it's the second or third time it has happened on the same app in the same week.

The alert signal isn't the cancellation count; it's the time between acceptance and cancellation, cross-referenced with trip type. A driver who consistently cancels within 90 to 120 seconds of accepting, and does so disproportionately on trips over 15 minutes or toward certain zones, shows a selective cancellation profile, not a technical or availability one. Spotting that profile among drivers whose average cancellation rate looks normal but whose cancellations concentrate on long or low-revenue trips is the first intervention point, before the problem erodes passenger retention.
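That cross-reference can be sketched per driver, assuming each accepted request is exported with an estimated trip duration, a destination zone and, when the driver cancelled, the seconds between acceptance and cancellation. The 120-second and 15-minute cutoffs follow the text; treating a destination in a caller-supplied set of low-return zones as "inconvenient", and the 20% rate and 3x lift thresholds, are illustrative assumptions. All names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional

FAST_CANCEL_S = 120  # upper end of the 90-to-120-second window
LONG_TRIP_MIN = 15   # "trips over 15 minutes"


@dataclass(frozen=True)
class Acceptance:
    driver_id: str
    est_trip_min: float
    destination_zone: str
    cancel_after_s: Optional[float] = None  # None if the driver did not cancel


@dataclass
class Profile:
    inconvenient: int = 0       # accepted trips that were long or to a low-return zone
    inconvenient_fast: int = 0  # of those, cancelled within FAST_CANCEL_S
    other: int = 0
    other_fast: int = 0
    cancelled: int = 0          # all driver cancellations, fast or slow


def cancellation_profiles(acceptances, low_return_zones=frozenset()):
    profiles = defaultdict(Profile)
    for a in acceptances:
        p = profiles[a.driver_id]
        inconvenient = a.est_trip_min > LONG_TRIP_MIN or a.destination_zone in low_return_zones
        fast = a.cancel_after_s is not None and a.cancel_after_s <= FAST_CANCEL_S
        if a.cancel_after_s is not None:
            p.cancelled += 1
        if inconvenient:
            p.inconvenient += 1
            p.inconvenient_fast += int(fast)
        else:
            p.other += 1
            p.other_fast += int(fast)
    return dict(profiles)


def selective_cancellers(profiles, min_inconvenient=10, min_rate=0.2, lift=3.0):
    """Drivers whose fast-cancel rate on inconvenient trips far exceeds their rate on the rest."""
    flagged = []
    for driver, p in profiles.items():
        if p.inconvenient < min_inconvenient:
            continue  # too few inconvenient trips to judge
        r_inc = p.inconvenient_fast / p.inconvenient
        r_other = p.other_fast / p.other if p.other else 0.0
        if r_inc >= min_rate and r_inc >= lift * r_other:
            flagged.append(driver)
    return sorted(flagged)
```

Comparing each driver against their own behavior on other trips, rather than against the fleet average, is what lets this surface drivers whose overall cancellation rate looks normal.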

Referral fraud: how poorly designed bonuses exploit themselves

Referral fraud appears almost exclusively when the operator runs an incentive program that pays out at registration or at a new driver's first activation. The pattern is predictable: the referring driver persuades someone with no real intent to work on the platform (a family member, a friend, sometimes a paid contact) to register, complete the minimum number of trips that triggers the bonus, and then disappear. The referring driver collects the incentive. The platform loses the bonus cost plus the onboarding time spent on a driver who was never going to stay.

Incentive design is the first line of defense. A bonus paid when the new driver completes 30 active days and maintains a rating of at least 4.2 is structurally resistant to this pattern, because it demands sustained behavior that a recruit with no intention of staying is unlikely to keep up for a full month. A bonus paid on completing 10 or 15 trips in the first week can be exploited within 48 to 72 hours. The difference between the two isn't just fraud risk; it's the kind of driver each structure attracts. Fast-activation incentives attract bonus drivers, not platform drivers.
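Writing the two bonus designs as eligibility rules makes the contrast concrete. A minimal sketch, assuming "active day" means a calendar day with at least one completed trip and the fast rule pays on 10 trips within the first 7 days; `DayActivity` and both function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class DayActivity:
    day: date
    completed_trips: int


def fast_activation_eligible(activity, activation_day, trips_required=10, window_days=7):
    """Exploitable design: pays once enough trips land in the first week."""
    cutoff = activation_day + timedelta(days=window_days)
    trips = sum(a.completed_trips for a in activity if activation_day <= a.day < cutoff)
    return trips >= trips_required


def sustained_eligible(activity, rating, active_days_required=30, min_rating=4.2):
    """Resistant design: needs 30 distinct active days and a rating of at least 4.2."""
    active_days = {a.day for a in activity if a.completed_trips > 0}
    return len(active_days) >= active_days_required and rating >= min_rating
```

A recruit who logs 12 trips over two days and then disappears clears the first rule and fails the second, however high their rating.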

Four weekly reviews that cover all five patterns

Most regional operators don't have a dedicated security team; they have the same operations manager who handles everything else. The goal isn't to build a sophisticated detection system from day one. It's to establish four reviews that can be completed in 20 to 30 minutes per week and that cover all five patterns with enough lead time to intervene before they escalate.

The four reviews that catch fraud before it becomes costly:

- Review trips weekly for flat or time-inconsistent GPS traces — any trip where GPS distance is less than 30% of charged distance requires direct verification with the driver

- Filter connection-disconnection logs by zone: clusters of three or more drivers going offline within the same 10-minute window in the same zone more than twice a week warrant review

- Cross-reference device IDs on new accounts against existing records: two active accounts sharing a device ID are an immediate alert that shouldn't wait for the weekly review

- Analyze each driver's cancellation profile: identify who cancels within 90 to 120 seconds of accepting and on which trip types, cross-referencing destination and distance to surface the selective pattern

The intervention threshold doesn't have to be certainty of fraud — it has to be enough anomaly to justify a direct conversation with the driver. Most anomalies have legitimate explanations: a GPS that doesn't work in poor-signal zones, a genuine cancellation due to a vehicle issue, a referral who tried the platform and chose another option. What doesn't have a legitimate explanation is a consistent pattern in the same coordinates, at the same times, with the same drivers. Fraud is almost always a repeated pattern, not an isolated event.

For the first two months I saw nothing. In the third month I started noticing a driver who always showed completed trips in zones where I couldn't see a car moving on the map. When I checked the GPS, the trace didn't match the times. Same driver, three or four times a week.

The five patterns described here — ghost trips, surge manipulation, multi-account fraud, selective cancellations and referral fraud — aren't exclusive to large operations or poorly managed platforms. They appear in any operation that reaches enough volume for fraud to become profitable, which typically happens between months 2 and 5. The operator who knows them before they appear is positioned to catch them in their earliest form, before they become a meaningful share of monthly revenue.

The difference between an operation that absorbs fraud without seeing it and one that detects and contains it isn't technological — it's a review habit. The four weekly checks described here don't require sophisticated infrastructure: they require someone to run them consistently. Fraud doesn't scale where it can't hide. An operation that reviews its logs regularly isn't an attractive target for any of these patterns — precisely because all of them require repetition to be profitable, and repetition is the first thing a systematic review surfaces.